Tier data from on-premises ONTAP clusters to Google Cloud Storage in NetApp Cloud Tiering

Free space on your on-premises ONTAP clusters by tiering inactive data to Google Cloud Storage in NetApp Cloud Tiering.

Quick start

Get started quickly by following these steps, or scroll down to the remaining sections for full details.

Prepare to tier data to Google Cloud Storage

You need the following:

- A source on-premises ONTAP cluster that's running ONTAP 9.6 or later that you have added to the NetApp Console, and a connection over a user-specified port to Google Cloud Storage. Learn how to discover a cluster.
- A service account that has the predefined Storage Admin role and storage access keys.
- A Console agent installed in a Google Cloud Platform VPC.
- Networking for the agent that enables an outbound HTTPS connection to the ONTAP cluster in your data center, to Google Cloud Storage, and to the Cloud Tiering service.

Set up tiering

In the NetApp Console, select an on-premises system, select Enable for the Tiering service, and follow the prompts to tier data to Google Cloud Storage.

Set up licensing

After your free trial ends, pay for Cloud Tiering through a pay-as-you-go subscription, an ONTAP Cloud Tiering BYOL license, or a combination of both:

- To subscribe from the Google Cloud marketplace, go to the Marketplace offering, select Subscribe, and then follow the prompts.
- To pay using a Cloud Tiering BYOL license, contact us if you need to purchase one, and then add it to the NetApp Console.

Requirements

Verify support for your ONTAP cluster, set up your networking, and prepare your object storage.

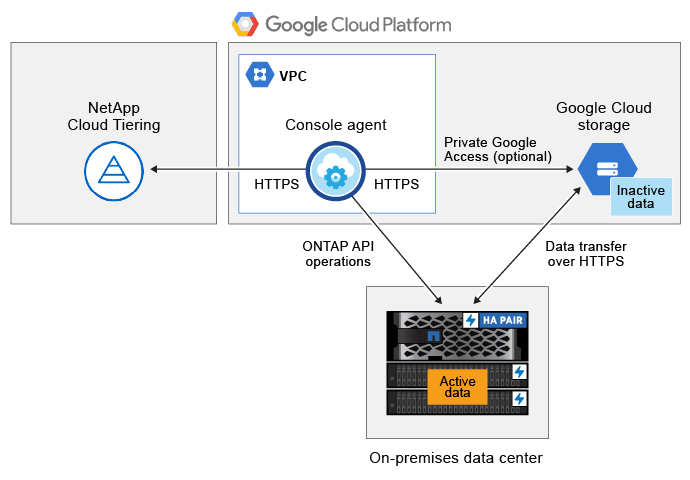

The following image shows each component and the connections that you need to prepare between them:

|

Communication between the agent and Google Cloud Storage is for object storage setup only. |

Prepare your ONTAP clusters

Your ONTAP clusters must meet the following requirements when tiering data to Google Cloud Storage.

- Supported ONTAP platforms

  - When using ONTAP 9.8 and later: You can tier data from AFF systems, or FAS systems with all-SSD aggregates or all-HDD aggregates.
  - When using ONTAP 9.7 and earlier: You can tier data from AFF systems, or FAS systems with all-SSD aggregates.

- Supported ONTAP versions

  - ONTAP 9.6 or later

- Cluster networking requirements

  - The ONTAP cluster initiates an HTTPS connection over port 443 to Google Cloud Storage.

    ONTAP reads and writes data to and from object storage. Object storage never initiates a connection; it only responds.

    A Google Cloud Interconnect is not required between the ONTAP cluster and Google Cloud Storage, but using one is the recommended best practice because it provides better performance and lower data transfer charges.

  - An inbound connection is required from the agent, which resides in a Google Cloud Platform VPC.

    A connection between the cluster and the Cloud Tiering service is not required.

  - An intercluster LIF is required on each ONTAP node that hosts the volumes you want to tier. The LIF must be associated with the IPspace that ONTAP should use to connect to object storage.

- Supported volumes and aggregates

  The total number of volumes that Cloud Tiering can tier might be less than the number of volumes on your ONTAP system. That's because volumes can't be tiered from some aggregates. Refer to the ONTAP documentation for functionality or features not supported by FabricPool.

  Cloud Tiering supports FlexGroup volumes. Setup works the same as any other volume.
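The platform and version rules above can be expressed as a small pre-check. The following is a minimal sketch only: the `Cluster` class, its field names, and the `can_tier_to_gcs` function are hypothetical and are not part of any NetApp tool or API. It encodes just the rules stated in this section and does not account for FabricPool limitations on individual aggregates.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Cluster:
    """Hypothetical description of an on-premises ONTAP cluster."""
    ontap_version: Tuple[int, int]  # (major, minor), for example (9, 8)
    platform: str                   # "AFF" or "FAS"
    aggregate_media: str            # "all-SSD" or "all-HDD"


def can_tier_to_gcs(cluster: Cluster) -> bool:
    """Return True if the cluster meets the platform and version rules above."""
    # ONTAP 9.6 is the minimum supported version.
    if cluster.ontap_version < (9, 6):
        return False
    # AFF systems qualify on every supported version.
    if cluster.platform == "AFF":
        return True
    if cluster.platform == "FAS":
        # All-SSD aggregates qualify on every supported version.
        if cluster.aggregate_media == "all-SSD":
            return True
        # All-HDD aggregates qualify only on ONTAP 9.8 and later.
        if cluster.aggregate_media == "all-HDD":
            return cluster.ontap_version >= (9, 8)
    return False
```

Versions are compared as integer tuples rather than strings so that a release such as 9.10 sorts after 9.8.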

Discover an ONTAP cluster

You need to add your on-premises ONTAP system to the NetApp Console before you can start tiering cold data.

Create or switch Console agents

A Console agent is required to tier data to the cloud. When tiering data to Google Cloud Storage, an agent must be available in a Google Cloud Platform VPC. You'll either need to create a new agent or make sure that the currently selected agent resides in Google Cloud.

Prepare networking for the Console agent

Ensure that the Console agent has the required networking connections.

- Ensure that the VPC where the agent is installed enables the following connections:

  - An HTTPS connection over port 443 to the Cloud Tiering service and to your Google Cloud Storage (see the list of endpoints)
  - An HTTPS connection over port 443 to your ONTAP cluster management LIF

- Optional: Enable Private Google Access on the subnet where you plan to deploy the agent.

  Private Google Access is recommended if you have a direct connection from your ONTAP cluster to the VPC and you want communication between the agent and Google Cloud Storage to stay in your virtual private network. Note that Private Google Access works with VM instances that have only internal (private) IP addresses (no external IP addresses).
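One way to sanity-check the outbound connections listed above is to attempt a TCP connection on port 443 from the agent host. The sketch below uses only the Python standard library. The function names are illustrative, and the hostnames you pass in are your own assumptions: the authoritative targets are the documented list of endpoints and your cluster management LIF. It confirms only that a TCP connection can be opened. It does not validate TLS or authentication.

```python
import socket
from typing import Dict, Iterable


def can_reach(host: str, port: int = 443, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name-resolution failures.
        return False


def check_endpoints(endpoints: Iterable[str], port: int = 443) -> Dict[str, bool]:
    """Map each endpoint to whether the given port is reachable from this host."""
    return {host: can_reach(host, port) for host in endpoints}
```

For example, calling `check_endpoints(["storage.googleapis.com", "cluster-mgmt.example.com"])` from the agent host returns a dictionary of reachability results, where `cluster-mgmt.example.com` is a placeholder for your cluster management LIF.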

Prepare Google Cloud Storage

When you set up tiering, you need to provide storage access keys for a service account that has Storage Admin permissions. A service account enables Cloud Tiering to authenticate and access Cloud Storage buckets used for data tiering. The keys are required so that Google Cloud Storage knows who is making the request.

The Cloud Storage buckets must be in a region that supports Cloud Tiering.

If you plan to configure Cloud Tiering to transition your tiered data to lower-cost storage classes after a certain number of days, do not select any lifecycle rules when setting up the bucket in your GCP account. Cloud Tiering manages the lifecycle transitions.

- Create a service account that has the predefined Storage Admin role.

- Go to GCP Storage Settings and create access keys for the service account:

  - Select a project, and select Interoperability. If you haven't already done so, select Enable interoperability access.
  - Under Access keys for service accounts, select Create a key for a service account, select the service account that you just created, and select Create Key.

    You'll need to enter the keys later when you set up Cloud Tiering.
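If you prefer to script the key creation instead of using the console steps above, the `google-cloud-storage` Python client exposes `Client.create_hmac_key`, which creates an interoperability (HMAC) key for a service account. The following is a sketch under stated assumptions, not a NetApp-documented procedure: the helper name `create_tiering_access_keys` is hypothetical, the service account must already exist with the Storage Admin role, and the client is passed in as an argument so the helper does not depend on how you authenticate.

```python
def create_tiering_access_keys(client, service_account_email):
    """Create HMAC (interoperability) access keys for a service account.

    `client` is assumed to be a google.cloud.storage.Client that is already
    authenticated for the project that owns the service account.
    """
    # create_hmac_key returns the key metadata and the secret. The secret is
    # shown only at creation time, so store it securely right away.
    metadata, secret = client.create_hmac_key(
        service_account_email=service_account_email
    )
    return {"access_key": metadata.access_id, "secret_key": secret}
```

With the library installed and credentials configured, you could call it as `create_tiering_access_keys(storage.Client(project="my-project"), "tiering@my-project.iam.gserviceaccount.com")` after `from google.cloud import storage`. The project and service account names here are placeholders. The returned access key and secret key are the values you enter later in the Credentials step.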

Tier inactive data from your first cluster to Google Cloud Storage

After you prepare your Google Cloud environment, start tiering inactive data from your first cluster.

-

Storage access keys for a service account that has the Storage Admin role.

-

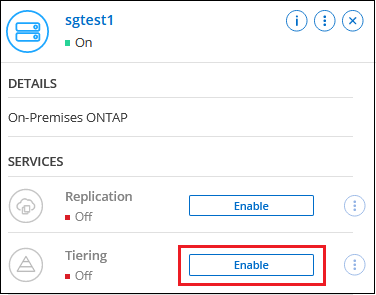

Select the on-premises ONTAP system.

-

Click Enable for the Tiering service from the right panel.

If the Google Cloud Storage tiering destination is available on the Systems page, you can drag the cluster onto the Google Cloud Storage system to initiate the setup wizard.

-

Define Object Storage Name: Enter a name for this object storage. It must be unique from any other object storage you may be using with aggregates on this cluster.

-

Select Provider: Select Google Cloud and select Continue.

-

Complete the steps on the Create Object Storage pages:

-

Bucket: Add a new Google Cloud Storage bucket or select an existing bucket.

-

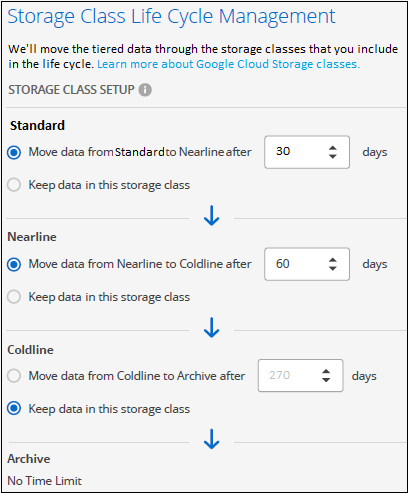

Storage Class Lifecycle: Cloud Tiering manages the lifecycle transitions of your tiered data. Data starts in the Standard class, but you can create rules to apply different storage classes after a certain number of days.

Select the Google Cloud storage class that you want to transition the tiered data to and the number of days before the data is assigned to that class, and select Continue. For example, the screenshot below shows that tiered data is assigned to the Nearline class from the Standard class after 30 days in object storage, and then to the Coldline class after 60 days in object storage.

If you choose Keep data in this storage class, then the data remains in the that storage class. See supported storage classes.

Note that the lifecycle rule is applied to all objects in the selected bucket.

-

Credentials: Enter the storage access key and secret key for a service account that has the Storage Admin role.

-

Cluster Network: Select the IPspace that ONTAP should use to connect to object storage.

Selecting the correct IPspace ensures that Cloud Tiering can set up a connection from ONTAP to your cloud provider's object storage.

You can also set the network bandwidth available to upload inactive data to object storage by defining the "Maximum transfer rate". Select the Limited radio button and enter the maximum bandwidth that can be used, or select Unlimited to indicate that there is no limit.

-

-

Click Continue to select the volumes that you want to tier.

-

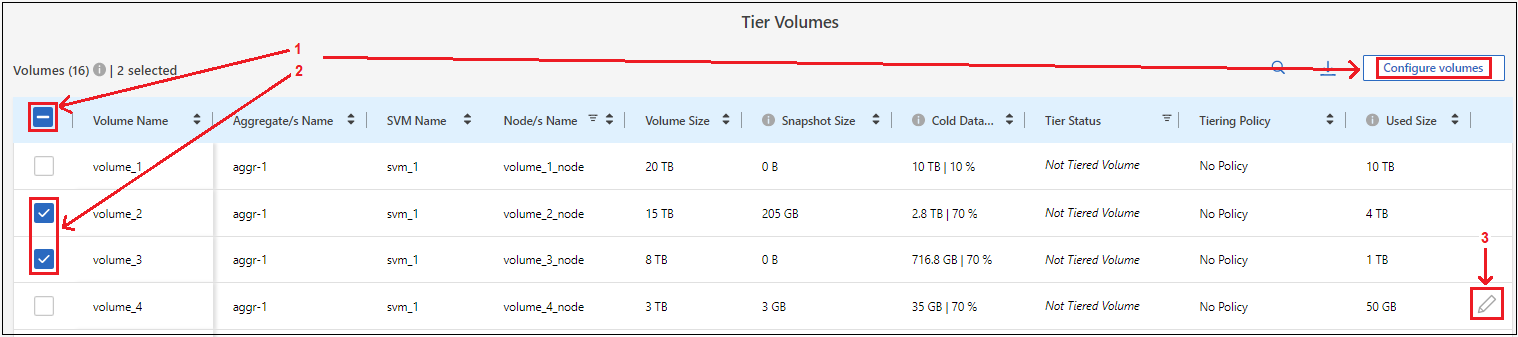

On the Tier Volumes page, select the volumes that you want to configure tiering for and launch the Tiering Policy page:

-

To select all volumes, check the box in the title row (

) and select Configure volumes.

) and select Configure volumes. -

To select multiple volumes, check the box for each volume (

) and select Configure volumes.

) and select Configure volumes. -

To select a single volume, select the row (or

icon) for the volume.

icon) for the volume.

-

-

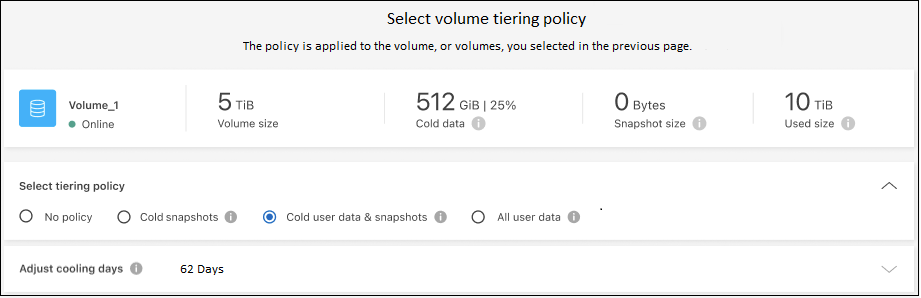

In the Tiering Policy dialog, select a tiering policy, optionally adjust the cooling days for the selected volumes, and select Apply.

You've successfully set up data tiering from volumes on the cluster to Google Cloud object storage.
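To make the Storage Class Lifecycle step concrete, the sketch below models the example from that step: data starts in the Standard class, moves to Nearline after 30 days in object storage, and then to Coldline after 60 days. The function and constant names are hypothetical, and this is only an illustration of how such day-based rules resolve. It is not how Cloud Tiering implements transitions, and treating the threshold day itself as already transitioned is an assumption.

```python
from typing import Sequence, Tuple

# (days in object storage, storage class), mirroring the example in the
# Storage Class Lifecycle step: Nearline after 30 days, Coldline after 60.
EXAMPLE_RULES: Tuple[Tuple[int, str], ...] = ((30, "Nearline"), (60, "Coldline"))


def storage_class_for_age(
    days_in_object_storage: int,
    rules: Sequence[Tuple[int, str]] = EXAMPLE_RULES,
) -> str:
    """Return the class an object would be in after the given number of days."""
    current = "Standard"  # tiered data starts in the Standard class
    # Apply rules in ascending order of days so the latest matching rule wins.
    for threshold_days, storage_class in sorted(rules):
        if days_in_object_storage >= threshold_days:
            current = storage_class
    return current
```

Passing an empty rule list corresponds to choosing Keep data in this storage class: the function then always returns the starting class.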

You can review information about the active and inactive data on the cluster. Learn more about managing your tiering settings.

You can also create additional object storage if you want to tier data from certain aggregates on a cluster to different object stores, or if you plan to use FabricPool Mirroring, where your tiered data is replicated to an additional object store. Learn more about managing object stores.