Replace the caching module - FAS8300 and FAS8700

You must replace the caching module in the controller module when your system registers a single AutoSupport (ASUP) message that the module has gone offline; failure to do so results in performance degradation.

The VER2 controller module in the FAS8300 has only one caching module socket. The FAS8700 does not have a VER2 controller module. Caching module functionality is not affected by the socket removal.

You must replace the failed component with a replacement FRU component you received from your provider.

Step 1: Shut down the impaired controller

You can shut down or take over the impaired controller using different procedures, depending on the storage system hardware configuration.

To shut down the impaired controller, you must determine the status of the controller and, if necessary, take over the controller so that the healthy controller continues to serve data from the impaired controller storage.

If you have a cluster with more than two nodes, it must be in quorum. If the cluster is not in quorum or a healthy controller shows false for eligibility and health, you must correct the issue before shutting down the impaired controller.

You might want to erase the contents of your caching module before replacing it.

- Although data on the caching module is encrypted, you might want to erase any data from the impaired caching module and verify that the caching module has no data:

  - Erase the data on the caching module:

    `system controller flash-cache secure-erase run -node node name localhost -device-id device_number`

    Run the `system controller flash-cache show` command if you don't know the Flash Cache device ID.

  - Verify that the data has been erased from the caching module:

    `system controller flash-cache secure-erase show`

- If AutoSupport is enabled, suppress automatic case creation by invoking an AutoSupport message:

  `system node autosupport invoke -node * -type all -message MAINT=number_of_hours_downh`

  Here number_of_hours_down is the number of hours for which case creation is suppressed, followed by a literal `h`. The following AutoSupport message suppresses automatic case creation for two hours:

  `cluster1:*> system node autosupport invoke -node * -type all -message MAINT=2h`

- Disable automatic giveback from the console of the healthy controller:

  `storage failover modify -node local -auto-giveback false`

- Take the impaired controller to the LOADER prompt:

  | If the impaired controller is displaying… | Then… |
  | --- | --- |
  | The LOADER prompt | Go to the next step. |
  | Waiting for giveback… | Press Ctrl-C, and then respond `y`. |
  | System prompt or password prompt (enter system password) | Take over or halt the impaired controller: `storage failover takeover -ofnode impaired_node_name`. When the impaired controller shows Waiting for giveback…, press Ctrl-C, and then respond `y`. |
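
If you keep a maintenance runbook, it can help to generate the exact command strings for this step ahead of time. The following Python sketch is illustrative only: the function name `pre_shutdown_commands` and the node name `node1` are hypothetical, and the code only formats the commands quoted in this procedure. It does not connect to a cluster or run anything.

```python
from typing import List


def pre_shutdown_commands(hours_down: int, impaired_node: str) -> List[str]:
    """Return the ONTAP commands from this step with placeholders filled in.

    Hypothetical helper: it only builds strings for a runbook.
    """
    if hours_down < 1:
        raise ValueError("hours_down must be a positive number of hours")
    if not impaired_node:
        raise ValueError("impaired_node must not be empty")
    return [
        # Suppress automatic case creation for the given number of hours.
        f"system node autosupport invoke -node * -type all -message MAINT={hours_down}h",
        # Run from the console of the healthy controller.
        "storage failover modify -node local -auto-giveback false",
        # Needed only if the impaired controller shows a system or password prompt.
        f"storage failover takeover -ofnode {impaired_node}",
    ]


# Example with assumed values: a two-hour window and a node called "node1".
for command in pre_shutdown_commands(2, "node1"):
    print(command)
```

Whether the takeover command is needed still depends on what the impaired controller is displaying, so treat the last entry as conditional.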

In a two-node MetroCluster configuration, to shut down the impaired controller you must determine the status of the controller and, if necessary, switch over the controller so that the healthy controller continues to serve data from the impaired controller storage.

You must leave the power supplies turned on at the end of this procedure to provide power to the healthy controller.

- Check the MetroCluster status to determine whether the impaired controller has automatically switched over to the healthy controller:

  `metrocluster show`

- Depending on whether an automatic switchover has occurred, proceed according to the following table:

  | If the impaired controller… | Then… |
  | --- | --- |
  | Has automatically switched over | Proceed to the next step. |
  | Has not automatically switched over | Perform a planned switchover operation from the healthy controller: `metrocluster switchover` |
  | Has not automatically switched over, you attempted switchover with the `metrocluster switchover` command, and the switchover was vetoed | Review the veto messages and, if possible, resolve the issue and try again. If you are unable to resolve the issue, contact technical support. |

- Resynchronize the data aggregates by running the `metrocluster heal -phase aggregates` command from the surviving cluster.

      controller_A_1::> metrocluster heal -phase aggregates
      [Job 130] Job succeeded: Heal Aggregates is successful.

  If the healing is vetoed, you have the option of reissuing the `metrocluster heal` command with the `-override-vetoes` parameter. If you use this optional parameter, the system overrides any soft vetoes that prevent the healing operation.

- Verify that the operation has been completed by using the `metrocluster operation show` command.

      controller_A_1::> metrocluster operation show
        Operation: heal-aggregates
            State: successful
       Start Time: 7/25/2016 18:45:55
         End Time: 7/25/2016 18:45:56
           Errors: -

- Check the state of the aggregates by using the `storage aggregate show` command.

      controller_A_1::> storage aggregate show
      Aggregate     Size Available Used% State   #Vols  Nodes            RAID Status
      --------- -------- --------- ----- ------- ------ ---------------- ------------
      ...
      aggr_b2    227.1GB   227.1GB    0% online       0 mcc1-a2          raid_dp, mirrored, normal...

- Heal the root aggregates by using the `metrocluster heal -phase root-aggregates` command.

      mcc1A::> metrocluster heal -phase root-aggregates
      [Job 137] Job succeeded: Heal Root Aggregates is successful

  If the healing is vetoed, you have the option of reissuing the `metrocluster heal` command with the `-override-vetoes` parameter. If you use this optional parameter, the system overrides any soft vetoes that prevent the healing operation.

- Verify that the heal operation is complete by using the `metrocluster operation show` command on the destination cluster:

      mcc1A::> metrocluster operation show
        Operation: heal-root-aggregates
            State: successful
       Start Time: 7/29/2016 20:54:41
         End Time: 7/29/2016 20:54:42
           Errors: -

- On the impaired controller module, disconnect the power supplies.
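
If you capture console output during the heal phases, the verification steps can be checked mechanically. The following Python sketch parses text shaped like the `metrocluster operation show` examples in this step and reports whether a given heal phase succeeded. The function names and the `SAMPLE` text are assumptions made for illustration, and the parser assumes the `Field: value` layout shown in those examples; it is not an ONTAP API.

```python
from typing import Dict

# Sample text copied from the example output in this procedure.
SAMPLE = """controller_A_1::> metrocluster operation show
  Operation: heal-aggregates
      State: successful
 Start Time: 7/25/2016 18:45:55
   End Time: 7/25/2016 18:45:56
     Errors: -
"""


def parse_operation_show(output: str) -> Dict[str, str]:
    """Parse 'Field: value' lines into a dict, skipping the prompt line."""
    fields: Dict[str, str] = {}
    for line in output.splitlines():
        if "::>" in line:
            continue  # echoed command prompt, not a field
        key, sep, value = line.partition(":")  # split at the first colon only
        if sep:
            fields[key.strip()] = value.strip()
    return fields


def heal_succeeded(output: str, operation: str) -> bool:
    """True if the output reports the named operation in state 'successful'."""
    fields = parse_operation_show(output)
    return fields.get("Operation") == operation and fields.get("State") == "successful"


print(heal_succeeded(SAMPLE, "heal-aggregates"))
```

Checking the operation name as well as the state avoids mistaking an earlier successful operation for the heal phase you just ran.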

Step 2: Remove the controller module

To access components inside the controller module, you must remove the controller module from the chassis.

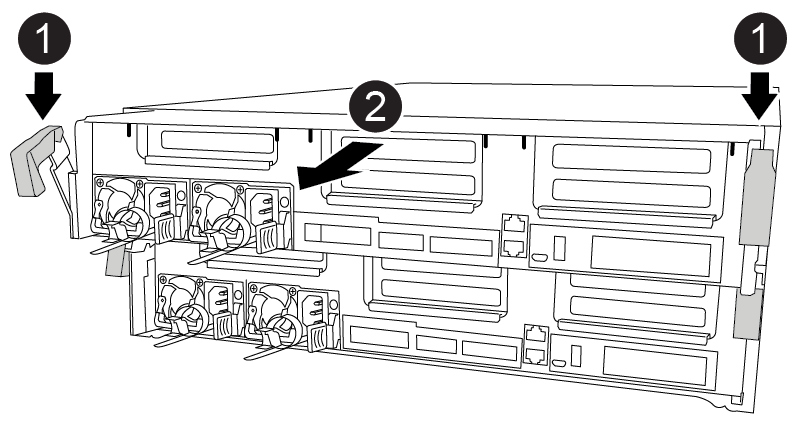

You can use the following animation, illustration, or the written steps to remove the controller module from the chassis.

- If you are not already grounded, properly ground yourself.

- Release the power cable retainers, and then unplug the cables from the power supplies.

- Loosen the hook and loop strap binding the cables to the cable management device, and then unplug the system cables and SFPs (if needed) from the controller module, keeping track of where the cables were connected.

  Leave the cables in the cable management device so that when you reinstall the cable management device, the cables are organized.

- Remove the cable management device from the controller module and set it aside.

- Press down on both of the locking latches, and then rotate both latches downward at the same time.

  The controller module moves slightly out of the chassis.

- Slide the controller module out of the chassis.

  Make sure that you support the bottom of the controller module as you slide it out of the chassis.

- Place the controller module on a stable, flat surface.

Step 3: Replace a caching module

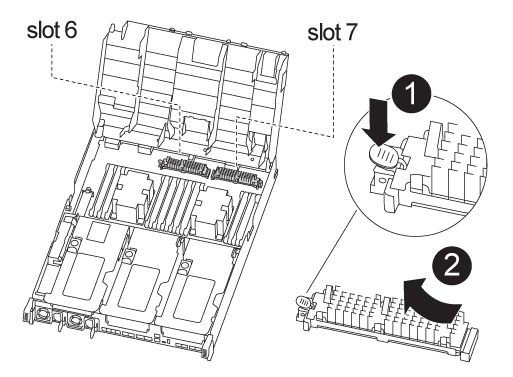

To replace a caching module, referred to as the Flash Cache on the label on your controller, locate the slot inside the controller and follow the specific sequence of steps. See the FRU map on the controller module for the location of the Flash Cache.

Slot 6 is available only in the FAS8300 VER2 controller module.

Your storage system must meet certain criteria depending on your situation:

- It must have the appropriate operating system for the caching module you are installing.

- It must support the caching capacity.

- Although the contents of the caching module are encrypted, it is a best practice to erase the contents of the module before replacing it. For more information, see the Statement of Volatility for your system on the NetApp Support Site.

  You must log in to the NetApp Support Site to display the Statement of Volatility for your system.

- All other components in the storage system must be functioning properly; if not, you must contact technical support.

You can use the following animation, illustration, or the written steps to replace a caching module.

- If you are not already grounded, properly ground yourself.

- Open the air duct:

  - Press the locking tabs on the sides of the air duct in toward the middle of the controller module.

  - Slide the air duct toward the back of the controller module, and then rotate it upward to its completely open position.

- Using the FRU map on the controller module, locate the failed caching module and remove it:

  Depending on your configuration, there may be zero, one, or two caching modules in the controller module. Use the FRU map inside the controller module to help locate the caching module.

  - Press the blue release tab.

    The caching module end rises clear of the release tab.

  - Rotate the caching module up and slide it out of the socket.

- Install the replacement caching module:

  - Align the edges of the replacement caching module with the socket and gently insert it into the socket.

  - Rotate the caching module downward toward the motherboard.

  - Placing your finger at the end of the caching module by the blue button, firmly push down on the caching module end, and then lift the locking button to lock the caching module in place.

- Close the air duct:

  - Rotate the air duct down to the controller module.

  - Slide the air duct toward the risers to lock it in place.

Step 4: Install the controller module

After you have replaced the component in the controller module, you must reinstall the controller module into the chassis.

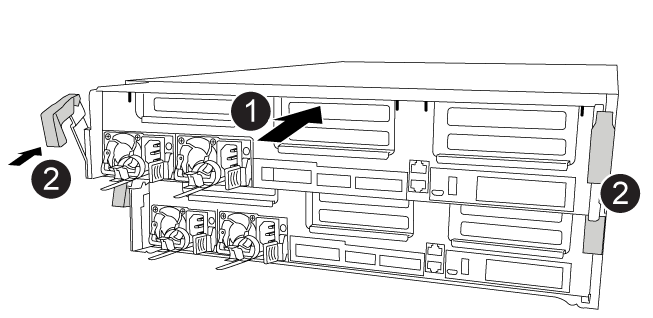

You can use the following animation, illustration, or the written steps to install the controller module in the chassis.

- If you have not already done so, close the air duct.

- Align the end of the controller module with the opening in the chassis, and then gently push the controller module halfway into the system.

  Do not completely insert the controller module in the chassis until instructed to do so.

- Cable the management and console ports only, so that you can access the system to perform the tasks in the following sections.

  You will connect the rest of the cables to the controller module later in this procedure.

- Complete the installation of the controller module:

  - Using the locking latches, firmly push the controller module into the chassis until the locking latches begin to rise.

    To avoid damaging the connectors, do not use excessive force when sliding the controller module into the chassis.

  - Fully seat the controller module in the chassis by rotating the locking latches upward, tilting them so that they clear the locking pins, gently pushing the controller all the way in, and then lowering the locking latches into the locked position.

  - Plug the power cords into the power supplies, reinstall the power cable locking collar, and then connect the power supplies to the power source.

    The controller module begins to boot as soon as power is restored. Be prepared to interrupt the boot process.

  - If you have not already done so, reinstall the cable management device.

  - Interrupt the normal boot process and boot to LOADER by pressing Ctrl-C.

    If your system stops at the boot menu, select the option to boot to LOADER.

  - At the LOADER prompt, enter `bye` to reinitialize the PCIe cards and other components.

Step 5: Restore the controller module to operation

You must recable the system, give back the controller module, and then reenable automatic giveback.

- Recable the system, as needed.

  If you removed the media converters (QSFPs or SFPs), remember to reinstall them if you are using fiber optic cables.

- Return the controller to normal operation by giving back its storage:

  `storage failover giveback -ofnode impaired_node_name`

- If automatic giveback was disabled, reenable it:

  `storage failover modify -node local -auto-giveback true`

Step 7: Switch back aggregates in a two-node MetroCluster configuration

This task applies only to two-node MetroCluster configurations.

- Verify that all nodes are in the `enabled` state:

  `metrocluster node show`

      cluster_B::> metrocluster node show

      DR                           Configuration  DR
      Group Cluster Node           State          Mirroring Mode
      ----- ------- -------------- -------------- --------- --------------------
      1     cluster_A
                    controller_A_1 configured     enabled   heal roots completed
            cluster_B
                    controller_B_1 configured     enabled   waiting for switchback recovery
      2 entries were displayed.

- Verify that resynchronization is complete on all SVMs:

  `metrocluster vserver show`

- Verify that any automatic LIF migrations being performed by the healing operations were completed successfully:

  `metrocluster check lif show`

- Perform the switchback by using the `metrocluster switchback` command from any node in the surviving cluster.

- Verify that the switchback operation has completed:

  `metrocluster show`

  The switchback operation is still running when a cluster is in the `waiting-for-switchback` state:

      cluster_B::> metrocluster show
      Cluster              Configuration State Mode
      -------------------- ------------------- ---------
       Local: cluster_B    configured          switchover
      Remote: cluster_A    configured          waiting-for-switchback

  The switchback operation is complete when the clusters are in the `normal` state:

      cluster_B::> metrocluster show
      Cluster              Configuration State Mode
      -------------------- ------------------- ---------
       Local: cluster_B    configured          normal
      Remote: cluster_A    configured          normal

  If a switchback is taking a long time to finish, you can check on the status of in-progress baselines by using the `metrocluster config-replication resync-status show` command.

- Reestablish any SnapMirror or SnapVault configurations.
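
The completion check in this step (both clusters in the `normal` state) can be sketched as a small text check on captured `metrocluster show` output. The Python below is illustrative only: the function names and sample strings are assumptions, and the parser assumes the three-column `Local:` / `Remote:` layout shown in the examples in this step, which might differ on other ONTAP releases.

```python
from typing import Dict

# Sample outputs modelled on the examples in this step.
IN_PROGRESS = """cluster_B::> metrocluster show
Cluster              Configuration State Mode
-------------------- ------------------- ---------
 Local: cluster_B    configured          switchover
Remote: cluster_A    configured          waiting-for-switchback
"""

DONE = """cluster_B::> metrocluster show
Cluster              Configuration State Mode
-------------------- ------------------- ---------
 Local: cluster_B    configured          normal
Remote: cluster_A    configured          normal
"""


def cluster_modes(output: str) -> Dict[str, str]:
    """Map 'Local' and 'Remote' to the Mode column (last token) of their rows."""
    modes: Dict[str, str] = {}
    for line in output.splitlines():
        parts = line.split()
        if len(parts) >= 4 and parts[0] in ("Local:", "Remote:"):
            modes[parts[0].rstrip(":")] = parts[-1]
    return modes


def switchback_complete(output: str) -> bool:
    """True only if both the Local and Remote rows are present and in mode 'normal'."""
    modes = cluster_modes(output)
    return set(modes) == {"Local", "Remote"} and all(
        mode == "normal" for mode in modes.values()
    )


print(switchback_complete(IN_PROGRESS), switchback_complete(DONE))
```

Requiring both rows to be present means truncated or unexpected output is treated as "not complete" rather than as success.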

Step 8: Complete the replacement process

Return the failed part to NetApp, as described in the RMA instructions shipped with the kit. See the Part Return and Replacements page for further information.